Graph based representations of molecules have been an active area of research in recent years, with downstream applications in property prediction (HOMO-LUMO gap and various energy properties), to generative modeling of the molecules itself.

We'll explore the generative modelling aspect. It's no secret that genrative models (GenAI in popular lexicon) has taken the world by storm in recent years. There has been remarkable progress in image, text, and video generation. And with some additional effort, generating higher representations of the above (such as code and mathematical proofs) have also seen amazing progress.

One (admittedly outdated by now) method of generative models is score models. This post aims to explain what they are and how they have been applied to the task of generating accurate of molecules as a 3D coordinate matrices. In the ML literature, this task is usually referred to as conformer prediction.

Score Models

Suppose we have a dataset . Instead of training to maximize the log-likelihood of the data:

by modelling directly, score models try to learn a model that approximates . The training objective for score models amounts to minimizing the following objective function:

This objective function can be shown to be equivalent to the minimizing the following:

The trace term is computationally prohibitive, and there have been there are some works to approximate it. One approach, called denoising score matching, is when we perturb a data point x via a predefined noise distribution and estimate the score of the perturbed data distribution . The objective to be minimized is now the following:

In the paper Generative Modeling by Estimating Gradients of the Data Distribution, Song et al. sets as a Gaussian with mean and covariance . The score model takes in an additional parameter , hence the name Noise Conditional Score Network.

Since , the loss objective can be simplified to:

In practice, we use Monte-Carlo estimation to compute the expectation across and .

Sampling from a Score Model

Now that we have a trained score-model, how do we actually sample data? Langevin dynamics states that the following formula:

where ensure that will match the data distribution as goes to infinity. In the NCSN framework, we follow an annealed version of this:

Applying Score Models to Molecular Conformation

Shi et al. were able to apply NCSN's to molecular conformation in Learning Gradient Fields for Molecular Conformation Generation. Let's revisit the conformation generation setup. We can represent a molecule as a graph , where each is an atom with various node features (atomic number, charge), and each represents a bond between atoms and . Each edge also has it's own features such as bond type and distance. Our task is given a graph , predict a 3D coordinate matrix .

Conceptually, what we want is to train a score model that estimates . This score model needs to be invariant to translation (shifting coordinates by a constant should yield ths same score), and equivariant to rotations (the gradient should rotate along with the coordinates).

In the paper, they make a pretty clever modelling choice. Instead of training a score model directly on a conformation , they train a score model on the interatomic distances. This method still works because interatomic distances are invariant to rotation and translation. Therefore, standard graph neural networks can be used while implicitly enforcing intrinsitc geometry, since generating accurate distances implicitly enforces certain geometric constraints. The authors also use 2/3-hop neighbors in the edge matrix to enfore even more geometric constraints.

But given a score model for interatomic distances, , how do we compute the score for a conformer? By breaking down , where is a function that computes the interatomic distances and is a GNN taking the molecule features and a distance vector and outputs a conformation or a molecule-level property.

Luckily, these two quantities are related by the chain rule:

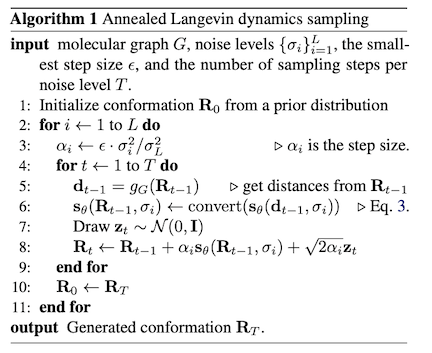

During sampling, we operate on the distances, and use the chain rule to convert our learned score model over distances to a score model over the respective conformer . The sampling pseudocode is outlined below:

On the conformation prediction task, it achieved SOTA results (for the time).

I was able to implement this in JAX. And here is an example of a sample I was able to generate.

Take a look at my implementation of this paper here.